The past

I started the Bugs Ahoy project in October 2011 partly because I was procrastinating from studying for midterms, but mostly because I saw new contributors being overwhelmed by Bugzilla’s… Bugzilla-ness. I wanted to reduce the number of decisions that new contributors had to make by:

- only showing bugs that match their skills

- only showing bugs that have someone ready to mentor

- presenting only the most useful information needed to make the required contribution

Bugs Ahoy was always something that was Good Enough, but I’ve never been able to focus on making it the best tool it could be. I’ve heard enough positive feedback over the past 7 years to convince me that it was better than nothing, at least!

The future

Bugs Ahoy’s time is over, and I would like to introduce the new Codetribute site. This is the result of Fienny Angelina’s hard work, with Dustin Mitchell, Hassan Ali, and Eli Perelman contributing as well. It is the spiritual successor to Bugs Ahoy, built to address limitations of the previous system by people who know what they’re doing. I was thrilled by the discussions I had with the team while Codetribute was being built, and I’m excited to watch as the project evolves to address future needs.

Bugs Ahoy will redirect automatically to the Codetribute homepage, but you should update any project or language-specific bookmarks or links so they remain useful. If your project isn’t listed and you would like it to be, please go ahead and add it! Similarly, if you have suggestions for ways that Codetribute could be more useful, please file an issue!

]]>You’ll notice that almost every step is in the form “look for X”, followed by “suggest how to improve X”. This is not an accident; I find code review most effective when it is both constructive and collaborative. When a reviewer leaves a comment indicating that the current change is not optimal, this suggests that they have an optimal form in mind. If the reviewer does not (or cannot) describe their ideal outcome, they are setting the author of the changes up for failure.

Sometimes when I review code, I have a feeling that if it were written differently then the result would be better in some respect (maintainability, readability, performance, safety, etc.). When I can articulate this feeling as a concrete change I would like to see, one of several outcomes occurs:

- the author makes the change and agrees with my reasoning

- the author makes the change but disagrees with my reasoning, sparking a discussion about a specific result

- the author explains why my change is inappropriate based on prior experience with the code in question

All of these outcomes are beneficial – either the code is improved, or at least one party ends up learning something new about the code that’s being changed. This is one of the benefits of a collaborative code review process!

Other times when I review code and I feel that a change could be improved in some way, I find that I am having difficulty describing the specific improvement I have in mind. When this happens, I can:

- locally modify the changes under review to see if my idea is feasible

- create a minimal, representative sample of the change and demonstrate the transformation I have in mind

- decide that my idea is not worth the additional effort that would be required to achieve it

Once again, all of these outcomes are beneficial – either I now have concrete feedback that can be discussed, or I have decided that my idea should not be pursued (whether it’s based on incorrect assumptions or not a good use of time).

These are the guidelines that I try to follow as a code reviewer. My role is not merely a gatekeeper, evaluating whether code changes should be merged or not. Instead I am a coach, helping shape the changes into a form that will leave all parties satisfied.

]]>There are three main problems that require solving for any new C API to a Rust library:

- writing low-level bindings to call whatever methods are necessary on high-level Rust types

- writing equivalent C header files for the low-level bindings

- adding automated tests to ensure that all of the pieces work

Low-level bindings

Having never used telemetry.rs before, I wrote my bindings by looking at the reference commit for integrating the library into Servo, as well as examples/main.rs. This worked well for an afternoon hack, since I didn’t need to spend any time reading through the internals of the library to figure out what was important to expose. It did bite me later, when I realized that the implementation for Servo is significantly more complicated than the example code; that forced me to redesign several aspects of the API to require explicitly passing around global state (as can be seen in this commit).

My workflow here was to categorize the library usage that I saw in Servo, which yielded three main areas that I needed to expose through C: initialization/shutdown, definition and recording of histogram types, and serialization. In each case I sketched out some functions that made sense (e.g. `count_histogram.record(1)` became `unsafe extern "C" fn telemetry_count_record(count: *mut count_t, value: libc::c_uint)`) and wrote placeholder structures to verify that everything compiled.

Next I implemented type constructors and destructors, and decided not to expose any Rust structure members to C. This allowed me to use types like Vec<T> in my implementation of the global telemetry state, which both improved the resulting API ergonomically (many fewer _free functions are required) and allowed me to write more concise and correct code. This decision also allowed me to define a destructor on a type exposed to C; this would usually be forbidden due to changing the low-level representation of the type in ways visible to C if the structure members were exposed. Generally these API methods took the form of the following:

```rust
#[no_mangle]
pub unsafe extern "C" fn telemetry_new_something(telemetry: *mut telemetry_t, ...) -> *mut something_t {
    // Allocate on the heap, then hand ownership to the caller as a raw pointer.
    let something = Box::new(something::new(...));
    Box::into_raw(something)
}

#[no_mangle]
pub unsafe extern "C" fn telemetry_free_something(something: *mut something_t) {
    // Reclaim ownership so the value is dropped and its memory released.
    let something = Box::from_raw(something);
    drop(something);
}
```

The use of `Box` in this code places the enclosed value on the heap, rather than the stack, which allows us to return to the caller without the value being deallocated. However, because C deals in raw pointers rather than Rust’s `Box` type, we are forced to convert (i.e. `reinterpret_cast`) the box into a pointer that C can understand. This also means that the memory pointed to will not be deallocated until the Rust code explicitly asks for it, which is accomplished by converting the pointer back into an owned `Box` upon request.
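The ownership round trip described above can be tried out in isolation. The following is a minimal sketch, not code from telemetry.rs: `Counter` and the `counter_*` functions are hypothetical names, and a drop counter is added purely so the demo can observe when deallocation happens. A real C API would also carry the `no_mangle` attribute, as in the snippets above; it is left off here so the sketch builds as a plain binary.

```rust
use std::sync::atomic::{AtomicUsize, Ordering};

// Counts how many times `Drop` has run, so the demo can observe deallocation.
static DROPS: AtomicUsize = AtomicUsize::new(0);

// Hypothetical stand-in for a type exposed to C only as an opaque pointer.
pub struct Counter {
    // `Vec` is not FFI-safe, which is fine because C never sees the fields.
    hits: Vec<u32>,
}

impl Drop for Counter {
    fn drop(&mut self) {
        DROPS.fetch_add(1, Ordering::SeqCst);
    }
}

pub extern "C" fn counter_new() -> *mut Counter {
    // Move the value to the heap and give up ownership; Rust will not free it
    // until the pointer is turned back into a `Box`.
    Box::into_raw(Box::new(Counter { hits: Vec::new() }))
}

pub unsafe extern "C" fn counter_record(counter: *mut Counter, value: u32) {
    (*counter).hits.push(value);
}

pub unsafe extern "C" fn counter_len(counter: *const Counter) -> usize {
    (*counter).hits.len()
}

pub unsafe extern "C" fn counter_free(counter: *mut Counter) {
    if !counter.is_null() {
        // Reclaim ownership; dropping the `Box` runs `Drop` and frees the memory.
        drop(Box::from_raw(counter));
    }
}

fn main() {
    let counter = counter_new();
    unsafe {
        counter_record(counter, 1);
        counter_record(counter, 2);
        assert_eq!(counter_len(counter), 2);
        // Nothing has been dropped while only the raw pointer exists.
        assert_eq!(DROPS.load(Ordering::SeqCst), 0);
        counter_free(counter);
    }
    assert_eq!(DROPS.load(Ordering::SeqCst), 1);
}
```

The null check in `counter_free` is a design choice of this sketch rather than something the original code does; it mirrors the way `free(NULL)` is harmless in C.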

Once I had meaningful types, I filled out the API methods that were used for operating on them. The types used in the public API are very thin wrappers around the full-featured Rust types, so the step was mostly boilerplate like this:

```rust
#[no_mangle]
pub unsafe extern "C" fn telemetry_record_flag(flag: *mut flag_t) {
    (*flag).inner.record();
}
```

The code for serialization was the most interesting part, since it required some choices. The Rust API requires passing a Sender<JSON> and allows the developer to retrieve the serialized results at any point in the future through the JSON API. In the interests of minimizing the amount of work required on a Saturday afternoon, I chose to expose a synchronous API that waits on the result from the receiver and returns the pretty-printed result in a string, rather than attempting to model any kind of Sender/Receiver or JSON APIs. Even this ended up causing some trouble, since telemetry.rs supports stable, beta and nightly versions of Rust right now. Rust 1.5 contains some nice ffi::CString APIs for passing around C string representations of Rust strings, but these are named differently in Rust 1.4 and don’t exist in Rust 1.3. To solve this problem, I ended up defining an opaque type named serialization_t which wraps a CString value, along with a telemetry_borrow_string API function to extract a C string from it. The resulting API works across all versions of Rust, even if it feels a bit clunky.
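To make the shape of that design concrete, here is a rough sketch of a blocking serialization call that returns an opaque wrapper around a `CString`. It is not the telemetry.rs code: `Serialization`, `serialize_async`, `serialize_blocking`, `serialization_borrow_string` and `serialization_free` are names invented for this example, the worker thread stands in for the library's asynchronous serializer, and `no_mangle` is again omitted so the sketch builds as a plain binary. It only uses `CString::new` and `CString::as_ptr`, which is one way to sidestep the version differences mentioned above.

```rust
use std::ffi::{CStr, CString};
use std::os::raw::c_char;
use std::sync::mpsc::{channel, Sender};
use std::thread;

// Opaque to C: owns the bytes that `serialization_borrow_string` points into.
pub struct Serialization {
    inner: CString,
}

// Hypothetical stand-in for an asynchronous serializer that replies on a channel.
fn serialize_async(reply: Sender<String>) {
    thread::spawn(move || {
        let _ = reply.send(String::from("{\"flag\": 1}"));
    });
}

pub extern "C" fn serialize_blocking() -> *mut Serialization {
    let (tx, rx) = channel();
    serialize_async(tx);
    // Block until the worker replies; a null pointer signals failure to C.
    let text = match rx.recv() {
        Ok(text) => text,
        Err(_) => return std::ptr::null_mut(),
    };
    match CString::new(text) {
        Ok(inner) => Box::into_raw(Box::new(Serialization { inner })),
        // The text contained an interior NUL byte and cannot be a C string.
        Err(_) => std::ptr::null_mut(),
    }
}

// The returned pointer is only valid until `serialization_free` is called.
pub unsafe extern "C" fn serialization_borrow_string(s: *const Serialization) -> *const c_char {
    (*s).inner.as_ptr()
}

pub unsafe extern "C" fn serialization_free(s: *mut Serialization) {
    if !s.is_null() {
        drop(Box::from_raw(s));
    }
}

fn main() {
    let s = serialize_blocking();
    assert!(!s.is_null());
    unsafe {
        let text = CStr::from_ptr(serialization_borrow_string(s));
        println!("{}", text.to_str().unwrap());
        serialization_free(s);
    }
}
```

Keeping the `CString` inside an owned wrapper means the C caller never frees the string itself; it borrows the pointer and later hands the wrapper back to Rust.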

C header files

The next step was writing a header file that matched the public types and functions exposed by my low-level bindings (like an inverse bindgen). This was a straightforward matter of writing out matching function prototypes, since all of the types I expose are opaque structs (i.e. `struct telemetry_t;`).

The most interesting part of this step was writing a C program that linked against my Rust library and included my new header file. I ported a simple Rust test from one I added earlier to the low-level bindings, then wrote a straightforward Makefile to get the linking right:

```make
CC ?= gcc
CFLAGS += -I../../capi/include/
LDFLAGS += -L../../capi/target/debug/ -ltelemetry_capi
OBJ := telemetry.o

%.o: %.c
	$(CC) -c -o $@ $< $(CFLAGS)

telemetry: $(OBJ)
	$(CC) -o $@ $^ $(LDFLAGS)
```

This worked! Running the resulting binary yielded the same output as the test that used the C API from Rust, which seemed like a successful result.

Automated testing

Following html5ever's example, my prior work defined a separate crate for the C API (libtelemetry_capi), which meant that the default cargo test would not exercise it. Until this point I had been running Cargo from the capi subdirectory, and running the makefile from the examples/capi subdirectory. Continuing to steal from html5ever's prior art, I created a script that would run the full suite of Cargo tests for the non-C API parts, then run cargo test --manifest-path capi/Cargo.toml, followed by make -C examples/capi, and made Travis use that as its script to run all tests.

These changes led me to discover a problem with my Makefile - any changes to the Rust code for the C API would not cause the example to be rebuilt, so I didn't actually have effective local continuous integration (the changes still would have been picked up on Travis). Accordingly, I added a DEPS variable to the makefile that looked like this:

```make
DEPS := ../../capi/include/telemetry.h ../../capi/target/debug/libtelemetry_capi.a Makefile
```

which causes the example to be rebuilt any time any of the C header, the underlying static library, or the Makefile itself are changed. The result is that whenever I'm modifying telemetry.rs, I can now make changes, run ./travis-build.sh and feel confident that I haven't inadvertently broken the C API.

Note: this only works if searching for the mentor tag in the whiteboard yields a unique result. If the tag reads mentor=ashish and there’s another account with :ashish_d, the result will not be unique, so no relevant header value or footer will be applied to any messages sent to Ashish.
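The uniqueness requirement is easier to see in a small sketch. This is an illustration only, not how Bugzilla actually performs the lookup: the functions `mentor_nick` and `unique_match`, the sample account strings, and the assumption that matching is a plain substring search for `:nick` are all made up for the example.

```rust
// Pull the nick out of a whiteboard such as "[mentor=ashish][lang=c++]".
fn mentor_nick(whiteboard: &str) -> Option<&str> {
    let start = whiteboard.find("mentor=")? + "mentor=".len();
    let rest = &whiteboard[start..];
    let end = rest
        .find(|c: char| c == ']' || c.is_whitespace())
        .unwrap_or(rest.len());
    if end == 0 {
        None
    } else {
        Some(&rest[..end])
    }
}

// Return the single account whose name contains ":nick", or None when the
// search is ambiguous or finds nothing (assumed substring matching).
fn unique_match<'a>(nick: &str, accounts: &[&'a str]) -> Option<&'a str> {
    let needle = format!(":{}", nick);
    let mut hits = accounts
        .iter()
        .copied()
        .filter(|account| account.contains(needle.as_str()));
    match (hits.next(), hits.next()) {
        (Some(only), None) => Some(only),
        _ => None,
    }
}

fn main() {
    let accounts = ["Ashish [:ashish]", "Ashish D [:ashish_d]"];

    // ":ashish" is a prefix of ":ashish_d", so two accounts match.
    let nick = mentor_nick("[mentor=ashish]").unwrap();
    println!("{:?}", unique_match(nick, &accounts));

    // The longer nick matches only one account.
    println!("{:?}", unique_match("ashish_d", &accounts));
}
```

Under these assumptions the first lookup is ambiguous and returns nothing, which is the situation the note warns about.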

Please make use of this! There is no longer a good excuse for questions in mentored bugs to go unanswered or overlooked; let’s make sure we’re providing the best possible mentoring experience to new contributors that we can.

]]>Here’s the plan:

- The task board will scrape data from an empty GitHub repository’s issues list

- These issues will be tagged in broad, useful ways (marketing, design, writing, mozilla hispano, whatever)

- New contributors with skills other than writing code will be able to browse the tasks available and get involved more easily and quickly than ever before!

The main work that needs to be done falls into two categories:

- Authenticating with GitHub (please help, my brain just falls apart when I try to read about OAuth)

- Creating a page that allows creating new tasks/modifying existing ones through the GitHub API

We want to avoid any need for users of the task board (both task creators and browsers) to ever visit GitHub; it’s just serving as a convenient backend to store the data. I’m snowed under with too many projects and can’t focus on this right now, but I would be thrilled to work with someone (or several someones!) to get it done. This is going to be a very useful tool when it’s finished; we just need some elbow grease to crank out a prototype. If you have experience with JavaScript and/or Python, please get in touch! You can find the source on GitHub.

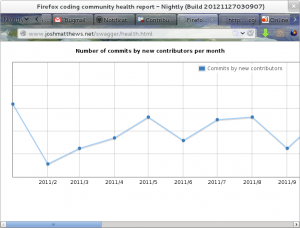

]]>Measuring the size of a whole community is hard, so I’m not tackling that problem yet. Instead, I’ve focused on the problem of making first-time contributions more visible, and measuring the rate of incoming new contributors in a methodical way. I decided to extract the commit data of mozilla-central into a database which I could query in useful ways; in particular I store a table of committers that includes a name, email address, number of commits, date of first commit, and date of last commit. You can see the raw results of my work at my GitHub repository, where I’m banging away at multiple tools that use this data in different ways.
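As a rough illustration of that committers table, the sketch below folds a list of commits into one row per email address. It is an in-memory stand-in, not the actual script or database schema: the `Committer` struct, the `build_committers` function, the sample data, and the choice to key rows by email and store dates as `YYYY-MM-DD` strings are all assumptions made for the example.

```rust
use std::collections::BTreeMap;

// One entry per commit: (author name, author email, date as "YYYY-MM-DD").
type Commit<'a> = (&'a str, &'a str, &'a str);

#[derive(Debug, PartialEq)]
struct Committer {
    name: String,
    commits: u32,
    first_commit: String,
    last_commit: String,
}

// Build the table keyed by email address (an assumption of this sketch).
fn build_committers(commits: &[Commit<'_>]) -> BTreeMap<String, Committer> {
    let mut table: BTreeMap<String, Committer> = BTreeMap::new();
    for &(name, email, date) in commits {
        let row = table.entry(email.to_string()).or_insert_with(|| Committer {
            name: name.to_string(),
            commits: 0,
            first_commit: date.to_string(),
            last_commit: date.to_string(),
        });
        row.commits += 1;
        // ISO dates sort lexicographically, so string comparison is enough.
        if date < row.first_commit.as_str() {
            row.first_commit = date.to_string();
        }
        if date > row.last_commit.as_str() {
            row.last_commit = date.to_string();
        }
    }
    table
}

fn main() {
    let commits = [
        ("Ada", "ada@example.com", "2012-03-04"),
        ("Ada", "ada@example.com", "2012-01-15"),
        ("Lin", "lin@example.com", "2012-02-01"),
    ];
    for (email, row) in &build_committers(&commits) {
        println!(
            "{} <{}>: {} commits, {} to {}",
            row.name, email, row.commits, row.first_commit, row.last_commit
        );
    }
}
```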

Tool the first – the Firefox coding community health report:

This measures the number of first patches that are committed in any given month. This graph allows us to see how effective we are at shepherding new contributors through the process of shipping their first change to the code.

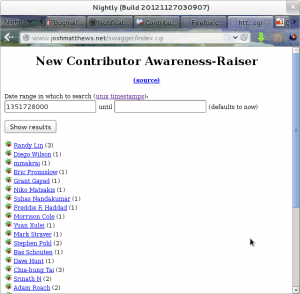

Tool the second – the first-time contributor awareness raiser:

This tool lists the contributors whose first patch was committed in a given period of time. With this, we can create reports that will allow us to take actions that celebrate this accomplishment (blog posts, swag, announcements in meetings, submissions for about:credits, etc.). This will allow us to take the burden of these tasks off of the engineers who can’t see beyond the patch, and place it in the hands of people who have a broader vision of building community effectively.

I’m not finished yet! I’m also really interested in measuring how many contributors drop off in a given month – by combining this with the incoming contributor data, we can get rough data about churn in the community, and compare how many people are leaving vs. how many newcomers we have. There are many more interesting measurements to be taken here, and I’m excited to be digging into this data. Feel free to join me! The GitHub repo contains the script to create the database from the mozilla-central git repository, and you’re welcome to explore the data with me.
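One simple way to approximate that comparison is to count, per month, how many committers made their first commit and how many made their last. The sketch below does this over hypothetical data; `monthly_flow` and the `YYYY-MM` month strings are assumptions for the example, not part of the actual tools.

```rust
use std::collections::BTreeMap;

// Input: one (first commit month, last commit month) pair per committer,
// with months written as "YYYY-MM".
// Output: month -> (newcomers, people whose last commit fell in that month).
fn monthly_flow(spans: &[(&str, &str)]) -> BTreeMap<String, (u32, u32)> {
    let mut flow: BTreeMap<String, (u32, u32)> = BTreeMap::new();
    for &(first, last) in spans {
        flow.entry(first.to_string()).or_insert((0, 0)).0 += 1;
        flow.entry(last.to_string()).or_insert((0, 0)).1 += 1;
    }
    flow
}

fn main() {
    let spans = [
        ("2012-01", "2012-03"),
        ("2012-01", "2012-01"),
        ("2012-03", "2012-06"),
    ];
    for (month, (joined, left)) in &monthly_flow(&spans) {
        println!("{}: {} joined, {} last seen", month, joined, left);
    }
}
```

The "last seen" counts for recent months overstate departures, since some of those people simply have not committed again yet; a real report would need a cutoff before treating someone as gone.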

]]>Take some time every day to clear your disk cache, then put your browser into private browsing mode. If you want to use it for traditionally-private things, that’s cool, it just may be slightly more embarrassing to thoroughly report bugs you encounter. What would be very helpful is if you could spend some time using your browser for regular, non-private things, and every so often open up about:cache and look through the entries in your disk cache. If you see any entry that looks like it came from one of the sites you were visiting, file a bug and CC :jdm and :ehsan. In fact, I will even accept comments on this post, or IRC pings, or emails, or whatever – these are important privacy failures that need to be addressed before FF 18 goes out the door. Do your part to make it unremarkable!

]]>All I ask is that you link to a document that has some kind of instruction for how to get involved. That’s it!

]]>